React SEO Best Practices to Follow

A website's search ranking correlates positively with the traffic it receives: higher rankings bring more traffic, which in turn raises the chance of converting visitors into leads. Among the JavaScript libraries and frameworks used to build web apps, React is a prominent choice, so it is worth understanding how to get the most out of it for search engine optimization (SEO).

With the help of our React developers, we explain the main factors that influence the ranking of a React website, the SEO difficulties that come with building a site in React, and the best React SEO practices for overcoming them.

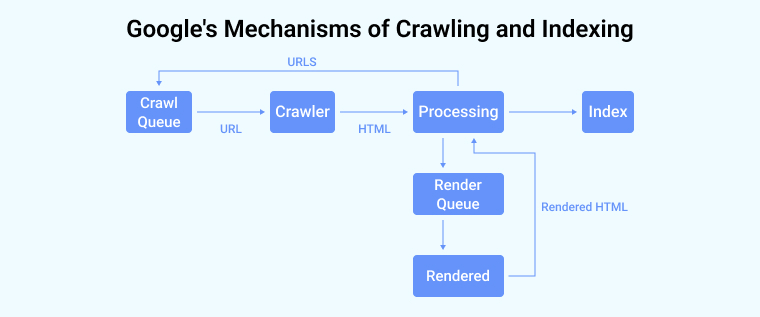

1. How Does Google Crawl and Index Web Pages?

Given that Google dominates the Internet search market with a market share of over 90%, it is necessary to delve deeper into its mechanisms of crawling and indexing.

The process of Google indexing can be broken down into several steps:

- Googlebot maintains a crawl queue containing all the URLs it needs to crawl and index in the future.

- When the crawler is idle, it takes the next URL from the queue, makes a request, and fetches the HTML.

- After parsing the HTML, Googlebot determines whether it needs to fetch and execute JavaScript to render the content. If so, the URL is added to a render queue.

- Later, the renderer fetches and executes the JavaScript to render the page, then sends the rendered HTML back to the processing unit.

- The processing unit extracts the URLs from the <a> tags on the page and adds them to the crawl queue.

- The content is then added to Google's index.
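The steps above can be sketched as a toy model with two queues. This is only an illustration of the flow described here, not Google's implementation: the `crawl` function, the shape of the `site` object, and the `render` property that marks a JavaScript-dependent page are all assumptions made for the example.

```javascript
// Toy model of the crawl queue and render queue. All names are hypothetical.
function crawl(site, startUrl) {
  const crawlQueue = [startUrl]; // URLs waiting to be fetched
  const renderQueue = [];        // URLs waiting for JavaScript execution
  const seen = new Set([startUrl]);
  const index = new Map();       // url -> indexed text

  // Processing stage: index the final HTML and enqueue newly found links.
  function processHtml(url, html) {
    index.set(url, html.replace(/<[^>]*>/g, " ").replace(/\s+/g, " ").trim());
    for (const match of html.matchAll(/<a\s[^>]*href="([^"]+)"/g)) {
      const link = match[1];
      if (site[link] && !seen.has(link)) {
        seen.add(link);
        crawlQueue.push(link);
      }
    }
  }

  while (crawlQueue.length > 0 || renderQueue.length > 0) {
    if (crawlQueue.length > 0) {
      const url = crawlQueue.shift();
      const page = site[url];
      if (page.render) {
        renderQueue.push(url); // needs JavaScript: defer to the renderer
      } else {
        processHtml(url, page.html);
      }
    } else {
      // In this model, rendering happens only when the crawl queue is empty.
      const url = renderQueue.shift();
      processHtml(url, site[url].render());
    }
  }
  return index;
}

// A tiny made-up site: "/app" only has content after its JavaScript runs.
const site = {
  "/": { html: '<h1>Home</h1><a href="/about">About</a><a href="/app">App</a>' },
  "/about": { html: "<p>About us</p>" },
  "/app": {
    html: '<div id="root"></div>',
    render: () => '<p>Products</p><a href="/cart">Cart</a>',
  },
  "/cart": { html: "<p>Cart</p>" },
};

const index = crawl(site, "/");
console.log([...index.keys()]);
```

In this model the link to "/cart" is discovered only after "/app" has been rendered, which is why JavaScript-dependent pages, and anything reachable only through them, tend to be indexed later.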

There is a clear separation between the processing stage, which parses HTML, and the renderer stage, which executes JavaScript. The separation exists because executing JavaScript is computationally expensive and Googlebot has to process an enormous number of web pages. When Googlebot crawls a web application, it parses the HTML immediately and queues the JavaScript to run later. According to Google's documentation, a page typically stays in the render queue for a few seconds, although it can take longer.

The idea of a crawl budget is also noteworthy. Google's crawling is limited by bandwidth, time, and the number of available Googlebot instances, so it allocates a budget of resources to indexing each website. If you are building a content-heavy web application, such as an e-commerce platform with hundreds of pages, and you rely heavily on JavaScript to render the content, Google may be able to read only part of the content on your site.

2. What are the Most Common SEO Issues with React?

When doing SEO for a React application, you will inevitably run into challenges that keep the site from being indexed as well as it could be. Here are some of the most common SEO issues with React:

2.1 Slow and Complex Indexing

When a page depends on JavaScript, Googlebot first fetches the HTML and queues the JavaScript for later execution. This deferral lengthens the time it takes to index the page, which can hurt the SEO ranking of your React website.

2.2 Handling of JavaScript Errors

HTML and JavaScript handle errors very differently during rendering. HTML parsers are forgiving, but JavaScript is not: when a script hits an error it does not handle, execution stops at that point, and even a single error can cause indexing to fail.

If this happens while a search bot is rendering the page, the bot may index it as a blank page.
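A minimal sketch of the difference, in plain JavaScript: one unguarded render function that throws on bad data, and a wrapper that returns fallback markup instead of leaving the page blank. The names `renderProductList` and `renderSafely` are hypothetical and exist only for this example; in a React component tree, error boundaries serve a similar purpose.

```javascript
// Hypothetical render function standing in for client-side rendering code.
function renderProductList(products) {
  // Throws a TypeError when `products` is undefined.
  return "<ul>" + products.map((p) => `<li>${p.name}</li>`).join("") + "</ul>";
}

// Wrap a render function so a runtime error yields fallback markup
// instead of aborting the render.
function renderSafely(render, fallbackHtml) {
  return (...args) => {
    try {
      return render(...args);
    } catch (error) {
      console.error("Render failed:", error.message);
      return fallbackHtml;
    }
  };
}

const safeProductList = renderSafely(
  renderProductList,
  "<p>Products are temporarily unavailable.</p>"
);

const okHtml = safeProductList([{ name: "Lamp" }]);
const failedHtml = safeProductList(undefined); // falls back instead of throwing
```

The fallback does not fix the underlying bug, but it keeps some crawlable markup on the page while the error is logged.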

2.3 Exhausted Crawling Budget

Googlebot is allocated a specific crawl budget because crawling is resource-intensive. The budget is the maximum number of pages Googlebot will crawl on a site within a given time frame.

If JavaScript on a React page takes too long to execute, Googlebot can exhaust its crawl budget. The crawler then moves on to the next page or website without indexing the page in question.

2.4 Indexing of SPAs

React enables the development of single-page applications (SPAs), which are fast, responsive, and dynamically generated.

However, SPAs pose a challenge for SEO. An SPA can display its full content only after everything has finished loading. If Googlebot crawls a page before its content has loaded, the bot sees an empty page. A significant portion of the site may then go unindexed, which hurts its search ranking.
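To see why an unrendered SPA looks empty, here is a small sketch that approximates the text available in a page's HTML before any JavaScript runs. The helper `bodyTextWithoutJs` and both HTML samples are made up for illustration, and the regex-based tag stripping is a rough approximation, not a real HTML parser.

```javascript
// Approximate the body text a crawler sees without executing JavaScript.
function bodyTextWithoutJs(html) {
  const bodyMatch = /<body[^>]*>([\s\S]*?)<\/body>/i.exec(html);
  const body = bodyMatch ? bodyMatch[1] : html;
  return body
    .replace(/<script[\s\S]*?<\/script>/gi, " ") // drop scripts entirely
    .replace(/<[^>]*>/g, " ")                    // drop remaining tags
    .replace(/\s+/g, " ")
    .trim();
}

// Typical client-rendered shell: the body holds only an empty mount point.
const spaShell = `<!doctype html>
<html>
  <head><title>Shop</title></head>
  <body>
    <div id="root"></div>
    <script src="/static/js/main.js"></script>
  </body>
</html>`;

// The same page with its content already present in the HTML.
const renderedPage = `<!doctype html>
<html>
  <head><title>Shop</title></head>
  <body>
    <div id="root"><h1>Lamps</h1><p>Blue lamp</p></div>
    <script src="/static/js/main.js"></script>
  </body>
</html>`;

const shellText = bodyTextWithoutJs(spaShell);
const renderedText = bodyTextWithoutJs(renderedPage);
```

The practices in the next section all aim to move a page from the first case to the second.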

3. React SEO Best Practices

3.1 Static Site Generation (SSG)

Static site generation (SSG) produces a pre-rendered version of a React web application as HTML, CSS, and JavaScript files. These files can be delivered to the user's browser without server-side rendering or complex client-side JavaScript configuration.

It is well suited to building search engine-optimized web apps with React. By making a page's content more accessible and understandable to search engine crawlers, SSG can improve a website's rankings.

With SSG, the React application is built into a set of static files and then deployed to a web server or a content delivery network (CDN). This brings faster loading and better security, because pages no longer have to be generated on the server for each request.

Gatsby, Next.js, and React Static are a few examples of React static-site generators. These tools let developers build and publish static sites with features such as quick reloading, code splitting, and data management.
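The core idea behind these tools can be sketched without any framework: render every page once at build time and keep the output as files. The example below is a simplified illustration, not how Gatsby or Next.js work internally; `products`, `renderProductPage`, and `buildSite` are hypothetical names, and the result is kept in memory rather than written to disk.

```javascript
// Hypothetical content source; a real project might read a CMS, files, or an API.
const products = [
  { slug: "blue-lamp", name: "Blue Lamp", description: "A small blue desk lamp." },
  { slug: "oak-desk", name: "Oak Desk", description: "A solid oak writing desk." },
];

// Escape text before placing it in HTML.
function escapeHtml(text) {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

function renderProductPage(product) {
  const name = escapeHtml(product.name);
  const description = escapeHtml(product.description);
  return [
    "<!doctype html>",
    "<html>",
    `<head><title>${name}</title>`,
    `<meta name="description" content="${description}"></head>`,
    `<body><h1>${name}</h1><p>${description}</p></body>`,
    "</html>",
  ].join("\n");
}

// Build step: produce every page once, ahead of any request.
function buildSite(items) {
  const files = new Map(); // output path -> HTML
  for (const item of items) {
    files.set(`products/${item.slug}/index.html`, renderProductPage(item));
  }
  return files;
}

const files = buildSite(products);
console.log([...files.keys()]);
```

A real build would write each entry to disk and upload the folder to a web server or CDN; the point is that the HTML a crawler receives already contains the content.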

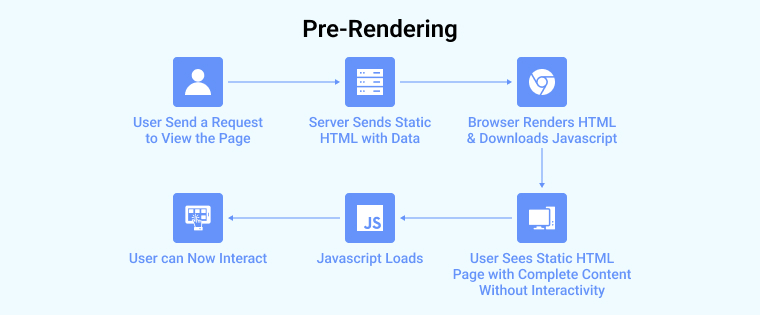

3.2 Pre-Rendering

Pre-rendering is a recommended practice for improving the SEO of a React-based website, since it can provide immediate benefits.

Pre-rendering means intercepting crawl requests from search bots and serving them a cached, static HTML version of the page. When the request comes from a user rather than a bot, the site loads as usual.

Pre-rendering offers several advantages for enhancing the indexing of a website:

- Pre-renderers support the latest web features.

- They can execute most kinds of modern JavaScript and convert the result into static HTML.

- Little or no change to the existing codebase is required.

- Implementation is straightforward.
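As a rough sketch of the interception step, the function below picks a cached snapshot for crawlers and the normal SPA shell for everyone else. It is an assumption-laden illustration: the user-agent pattern is short and incomplete, and `prerenderCache`, `SPA_SHELL`, and `selectResponse` are hypothetical names rather than the API of any particular pre-rendering service.

```javascript
// Illustrative, incomplete list of crawler user-agent substrings.
const BOT_PATTERN = /googlebot|bingbot|duckduckbot|baiduspider|yandex/i;

function isBot(userAgent) {
  return BOT_PATTERN.test(userAgent || "");
}

// Hypothetical cache of HTML snapshots produced ahead of time.
const prerenderCache = new Map([
  ["/products", "<html><body><h1>Products</h1><ul><li>Blue Lamp</li></ul></body></html>"],
]);

// What regular visitors receive: an empty mount point plus the app bundle.
const SPA_SHELL =
  '<html><body><div id="root"></div><script src="/main.js"></script></body></html>';

function selectResponse(path, userAgent) {
  if (isBot(userAgent) && prerenderCache.has(path)) {
    return { source: "prerendered", body: prerenderCache.get(path) };
  }
  return { source: "spa", body: SPA_SHELL };
}

const botResponse = selectResponse(
  "/products",
  "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
);
const userResponse = selectResponse(
  "/products",
  "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"
);
```

Because the application code itself is untouched, this is the "little or no change to the codebase" advantage in practice; the cost is keeping the snapshots in sync with the live content.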

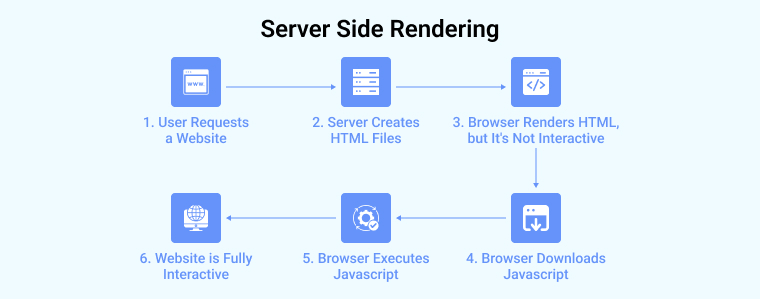

3.3 Server-Side Rendering (SSR)

As shown in the image above, server-side rendering (SSR) computes the initial state of a React web application on the server before it is rendered on the client. Because search engines can then index the page content directly, SSR improves the SEO value of the React application.

With SSR, the server generates the initial HTML and JavaScript for a React page and sends them to the client. Search engine crawlers can therefore parse and understand the page's content even when JavaScript is not executed.

To implement SSR, you can use a framework such as Next.js, which is built for creating React web apps with SSR capabilities. It offers a straightforward, intuitive API that handles the complexities of server-side rendering for you.
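The request flow can be sketched in plain JavaScript. This is a simplified stand-in, not Next.js or React's own server renderer: `catalog`, `renderApp`, `handleRequest`, the `/client.js` bundle path, and the `window.__INITIAL_STATE__` variable are assumptions for the example, and a template string takes the place of rendering real React components on the server.

```javascript
// Hypothetical data lookup; a real server would query a database or API.
const catalog = new Map([
  ["/products/blue-lamp", { name: "Blue Lamp", price: "$20" }],
]);

// Stand-in for rendering the component tree to an HTML string.
function renderApp(product) {
  return `<h1>${product.name}</h1><p>Price: ${product.price}</p>`;
}

// Build the full response for one request, on the server, per request.
function handleRequest(path) {
  const product = catalog.get(path);
  if (!product) {
    return {
      status: 404,
      body: "<!doctype html><html><body><h1>Not found</h1></body></html>",
    };
  }
  // Escape "<" so the embedded JSON cannot close the script tag early.
  const initialState = JSON.stringify(product).replace(/</g, "\\u003c");
  const body = [
    "<!doctype html>",
    "<html>",
    `<head><title>${product.name}</title></head>`,
    "<body>",
    `<div id="root">${renderApp(product)}</div>`,
    `<script>window.__INITIAL_STATE__ = ${initialState};</script>`,
    '<script src="/client.js"></script>',
    "</body>",
    "</html>",
  ].join("\n");
  return { status: 200, body };
}

const found = handleRequest("/products/blue-lamp");
const missing = handleRequest("/products/unknown");
```

The content sits inside the root element before any client script runs, and the serialized state lets the client-side code take over without fetching the same data again.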

3.4 Using React Helmet

React Helmet is a library for managing the tags in a document's head, including the title tag and meta description, to improve search engine optimization (SEO). Setting meta tags dynamically based on each page's content can improve the page's visibility in search engine results pages.

One benefit is the ability to give every page its own meta title and description. This can improve click-through rates and make pages easier to find for users looking for relevant content.

Here's an example of using React Helmet in a React web app:

```jsx
import React from "react";
import { Helmet } from "react-helmet";
import ProductList from "../components/ProductList";

// Home page: sets page-specific <head> tags with React Helmet.
const Home = () => {
  return (
    <React.Fragment>
      <Helmet>
        <title>title</title>
        <link rel="icon" href={"path"} />
        <meta name="description" content={"description"} />
        <meta name="keywords" content={"keyword"} />
        <meta property="og:title" content={"og:title"} />
        <meta property="og:description" content={"og:description"} />
      </Helmet>
      <ProductList />
    </React.Fragment>
  );
};

export default Home;
```

3.5 Avoid Hashed URLs

This issue is not common in React, but it is important to avoid hashed URLs, that is, URLs that keep the route after a hash symbol (#).

In general, Google will not index or consider anything that appears after the hash symbol (#). All of these pages will be seen as one and the same page at the root of the domain.

For page changes in single-page applications (SPAs) that use client-side routing, use the History API instead. Both React Router and Next.js make this easy.
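The built-in URL class makes the problem easy to see. In the sketch below, the example.com addresses are placeholders and `requestTarget` and `hashToPath` are hypothetical helpers; the point is that everything after the # is a fragment, which is not part of the path that identifies the page.

```javascript
// Placeholder URLs on an example domain.
const hashed = new URL("https://example.com/#/products/blue-lamp");
const clean = new URL("https://example.com/products/blue-lamp");

// The path and query identify the page; the fragment is not included.
function requestTarget(url) {
  return url.pathname + url.search;
}

// Convert a hash-style route into a regular path, as a History API router would use.
function hashToPath(href) {
  const url = new URL(href);
  if (url.hash.startsWith("#/")) {
    url.pathname = url.hash.slice(1); // "#/products/x" -> "/products/x"
    url.hash = "";
  }
  return url.href;
}

const hashedTarget = requestTarget(hashed); // every hash route collapses to "/"
const cleanTarget = requestTarget(clean);
const converted = hashToPath("https://example.com/#/products/blue-lamp");
```

In the browser, a router built on the History API updates the address with history.pushState, so each view gets a distinct path like the second URL.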

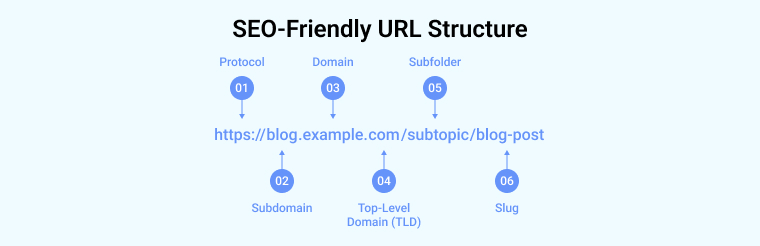

3.6 URL Case

Googlebot treats URLs that differ only in capitalization, such as “/Invision” and “/invision”, as separate pages.

To avoid this common mistake, always generate URLs in lowercase.

Here's an example of what an SEO-friendly URL looks like:
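One possible way to enforce lowercase URLs is to normalize the path wherever links are generated and to redirect any mixed-case request to its lowercase form. The sketch below assumes two hypothetical helpers, `toCanonicalPath` and `caseRedirect`; the permanent (301) redirect is a design choice for the example, not something the article prescribes, and query strings are left untouched because their values may be case-sensitive.

```javascript
// Lowercase only the path; keep the query string as it is.
function toCanonicalPath(path) {
  const url = new URL(path, "https://example.com"); // placeholder origin, used only for parsing
  return url.pathname.toLowerCase() + url.search;
}

// Decide whether a request should be redirected to its lowercase form.
function caseRedirect(path) {
  const canonical = toCanonicalPath(path);
  return canonical === path ? null : { status: 301, location: canonical };
}

const mixedCase = caseRedirect("/Invision");
const alreadyLower = caseRedirect("/invision");
const withQuery = toCanonicalPath("/Products/Blue-Lamp?Ref=Mail");
```

With this in place, "/Invision" and "/invision" resolve to a single address instead of competing as two separate pages.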

3.7 Using href Links

Another common mistake when changing URLs in single-page applications (SPAs) is using a <div> or <button> element instead of an <a> element with an href attribute. This is less a shortcoming of React itself than of how it is used.

This approach can also hurt your SEO. When Googlebot crawls a URL, it looks for further URLs to crawl in the href attributes of <a> elements. If it finds no <a href> links, it cannot discover any further URLs from that page.

Therefore, use <a href> links to the URLs on your website so that search engines can discover them.
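A small sketch shows the difference from a crawler's point of view. The `extractLinks` helper and the two markup samples are made up for illustration, and the regular expression is a simplification that only handles double-quoted href values; it is not how Googlebot parses pages.

```javascript
// Collect the targets of <a href="..."> elements, the links a crawler can follow.
function extractLinks(html) {
  const links = [];
  for (const match of html.matchAll(/<a\s[^>]*?href="([^"]*)"/gi)) {
    links.push(match[1]);
  }
  return links;
}

// Navigation driven by click handlers: nothing for a crawler to follow.
const clickHandlerNav =
  `<div onclick="goTo('/pricing')">Pricing</div>` +
  `<button onclick="goTo('/contact')">Contact</button>`;

// The same navigation with real links.
const anchorNav = `<a href="/pricing">Pricing</a><a href="/contact">Contact</a>`;

const fromHandlers = extractLinks(clickHandlerNav);
const fromAnchors = extractLinks(anchorNav);
```

In a React app this usually means rendering real anchor elements; React Router's Link component, for example, renders an <a> element with an href, so it satisfies this requirement.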

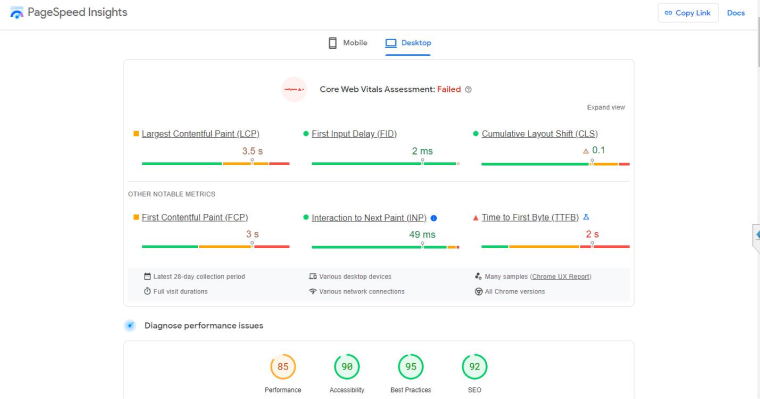

3.8 Optimizing Speed

Loading time is a factor in how well a page performs in search results. A slow page leaves users with a poor impression and can hurt the site's search ranking.

To make a React web application faster, you can use tools such as Google PageSpeed Insights to find and fix performance problems. In addition, the practices described above, such as the code splitting offered by static-site generators, speed up loading by fetching only the React components a given page needs rather than the whole application at once.
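The idea of loading only what a page needs can be sketched as a registry that runs a component's loader the first time its route is requested and reuses the result afterwards. Everything here is hypothetical (`createLazyRegistry`, the route table, the string-returning "components"), and the loaders are synchronous only to keep the example self-contained; in a real React app each loader would typically be a dynamic import() wrapped with React.lazy.

```javascript
// Each loader stands in for a dynamic import such as () => import("./Pricing").
function createLazyRegistry(loaders) {
  const loaded = new Map(); // route -> loaded component
  return {
    get(route) {
      if (!loaded.has(route)) {
        const load = loaders[route];
        if (!load) throw new Error(`No component registered for ${route}`);
        loaded.set(route, load()); // load on first use only
      }
      return loaded.get(route);
    },
    loadedRoutes() {
      return [...loaded.keys()];
    },
  };
}

let loadCount = 0; // counts how many components were actually loaded
const registry = createLazyRegistry({
  "/": () => { loadCount += 1; return () => "<h1>Home</h1>"; },
  "/pricing": () => { loadCount += 1; return () => "<h1>Pricing</h1>"; },
});

const Home = registry.get("/"); // loads the home component
registry.get("/");              // second request reuses the loaded component
const homeHtml = Home();
```

Visiting only the home page never runs the pricing loader, which is the effect code splitting aims for: less JavaScript to download and execute before the first page is usable.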

4. Conclusion

There are other factors to consider when optimizing React-based websites, but a few essential principles always hold true. Experienced professionals should also apply general SEO best practices such as semantic HTML, mobile-first development, and XML sitemaps.

5. FAQs

5.1 Is React good for SEO?

React was not designed with search engine optimization (SEO) in mind. It is a very capable library for building complex websites and apps with polished user interfaces. If you build a web application with React, you will need additional libraries and techniques to improve its SEO.

5.2 Does React Helmet improve SEO?

React Helmet is a reusable React component that updates the head section of the document. With it, you can easily update the meta tags of each page, which ultimately helps improve SEO.

5.3 How do I Optimize SEO with React?

Apply these practices to improve SEO in React:

- Pre-Rendering

- Pick the right rendering strategy

- Avoid hashed URLs

- Server-side rendering (SSR)

- Using React Helmet

- URL case

- Avoid lazy loading essential HTML

- Using href Links

Hardik Dhanani has strong technical proficiency and domain expertise, gained by managing multiple development projects for clients from different demographics. Hardik helps clients gain an advantage in compliance and technological trends. He is one of the core members of the technical analysis team.


Dec 28, 2023
